Can DeFi survive an era in which an AI can find a dozen critical security bugs in a smart contract for just $1.22 in tokens?

That’s how much it cost Anthropic researchers on average to run previously exploited contracts through major LLMs. They discovered that more than half of the exploits in 2025 could have been found and autonomously carried out by AI agents.

AI tools can also quickly find security holes and weak points in infrastructure and governance.

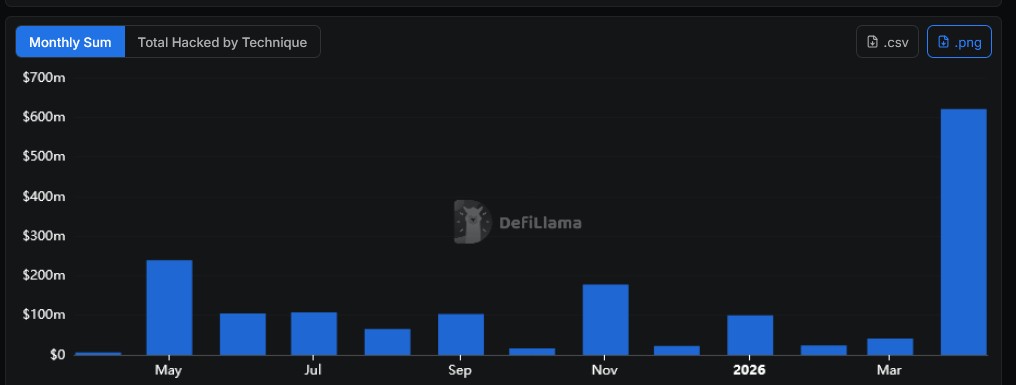

DeFi’s future is under a dark cloud right now, with more than a dozen platforms attacked since the start of April according to DeFiLlama, and $605 million drained.

The month began with the $285 million hack of Drift Protocol — a combination of social engineering and malware — followed in short order by Silo Finance (misconfigured oracle), Aethir (access control exploit), Rhea Finance (fake token contracts) and Volo Vault (compromised key) among other attacks.

The most devastating attack came on the weekend, when a hacker drained $290 million from KelpDAO’s LayerZero-based rsETH bridge. It caused ripples across the ecosystem, with more than 30 protocols pausing some functions. Aave was among the hardest hit with up to $200 million in bad debt, despite its own industry-leading security standards. The incident suggests that a DeFi platform’s integrity may only be as good as the weakest protocol it interacts with.

Jefferies digital asset analyst Andrew Moss said that the KelpDAO attack threatened Wall Street’s recent embrace of the sector.

“The potential loss of trust poses both near- and longer-term risks regardless of who is to blame,” Moss wrote. “Although we don’t expect TradFi firms to throw in the crypto towel, the rollout or expansion of tokenization initiatives across banks, asset managers, fintechs and payments may decelerate temporarily.”

Unfortunately, it doesn’t look like the threat will abate any time soon. Polymarket is currently pricing in the chance of another $100 million crypto hack this year at 76%.

Was AI even involved in April’s DeFi hacks?

None of the attacks in April have been conclusively linked to AI-identified exploits — with the biggest targeting infrastructure or governance rather than smart contracts — but many are convinced there is a link.

“I think this is AI,” posted Bankless host Ryan Sean Adams after the Kelp DAO exploit. “AI giving hackers dark superpowers. Defense has to catch up now — we’re out of time.”

Early NEAR contributor turned independent researcher Vadim also blamed AIs for a surge in exploits. He posted that smart contract bugs have been lying in plain sight all along, but the cost of finding them was too high — until now.

“AI collapsed the cost of code analysis. Finding exploits got 100x cheaper. Writing flawless code stayed just as expensive,” he wrote.

“Use AI to find an exploit, test it on a fork, and if it works — the risk of getting caught is near zero.”

Quantstamp founder Richard Ma tells Magazine that AI discovering exploits is a “growing problem” for the sector.

“It’s been growing at a fast pace especially these last 6 months as AI tools for cyberattacks are getting more mature,” he says. “The attackers have a lot to gain and they have dedicated teams.”

“AI is being used because AI is a lot more scalable. You can throw compute at it instead of manpower and reap outsized rewards as an attacker.”

Ma says that AI tools like Claude Code are used legitimately to identify bugs and exploits so that developers can fix code before release. But those same tools can be used to identify security holes in already deployed contracts.

“You can simply use normal versions of the LLMs to directly identify bugs,” he says. “There’s no guardrails on bug-finding.”

So why aren’t DeFi platforms using these tools to find the bugs in their own platforms?

“They should,” he says. “I’d advise caution using DeFi platforms now until they catch up.”

Research shows AI is very good at finding exploits

Researchers from Anthropic tested the major models in December last year on 405 smart contracts that had been previously exploited. The LLMs found $4.6 million worth of exploits. Worryingly, the dollar amount the AIs were able to extract was growing exponentially.

“Over the last year, frontier models’ exploit revenue on the 2025 problems doubled roughly every 1.3 months,” the researchers wrote, adding it cost just $1.22 in tokens on average for an AI to scan a contract exhaustively looking for vulnerabilities.
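To put the quoted doubling time in perspective, here is a minimal arithmetic sketch. It assumes the 1.3-month doubling holds steadily over the whole period, which is a simplification of the researchers' "roughly" and not a forecast. The function names are illustrative. Under that assumption, a full year of doubling works out to growth of roughly 600-fold.

```python
import math

# Doubling time quoted by the researchers, in months.
DOUBLING_TIME_MONTHS = 1.3


def growth_factor(months: float, doubling_time: float = DOUBLING_TIME_MONTHS) -> float:
    """Multiplicative growth after `months`, assuming steady exponential doubling."""
    return 2 ** (months / doubling_time)


def months_to_multiply(factor: float, doubling_time: float = DOUBLING_TIME_MONTHS) -> float:
    """Months needed to grow by `factor` under the same steady-doubling assumption."""
    return doubling_time * math.log2(factor)


if __name__ == "__main__":
    for months in (3, 6, 12):
        print(f"{months:>2} months: about {growth_factor(months):,.0f}x")
    print(f"10x growth takes about {months_to_multiply(10):.1f} months")
```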

“More than half of the blockchain exploits carried out in 2025—presumably by skilled human attackers—could have been executed autonomously by current AI agents.”

The models tested were less sophisticated and capable than Anthropic’s unreleased Mythos model. In testing, Mythos identified thousands of previously unknown zero-day vulnerabilities, including a 27-year-old bug in OpenBSD and a 16-year-old bug in FFmpeg. Anthropic has given early access to more than 40 large organizations, including AWS, Apple, Google, Microsoft and others, so they can find critical bugs and patch them ahead of the tech becoming publicly available.

Anthropic has yet to give access to a single crypto project, although Coinbase is reportedly hammering on their door trying to join the program.

Specialized AI is even better at finding exploits

Separately, researchers from University College London and the University of Sydney tested the capabilities of the specialized A1 agentic system. It gives agents six tools that help them understand smart contract behavior and test strategies against real blockchain states, among other things.

Their mid-2025 paper found the system had a 63% success rate across 23 tested real-world vulnerable contracts and was able to extract $9.33 million.

The real sting in the tail was their conclusion that it costs more to defend against AI exploits than it does to create them.

“Our economic analysis reveals a troubling asymmetry: attackers achieve profitability at $6000 exploit values while defenders require $60,000 — raising fundamental questions about whether AI agents inevitably favor exploitation over defense.”
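One way to see where a tenfold gap like this could come from is a simple break-even model. The sketch below is an illustration only: the cost per scan, the hit rate and the share of value each side captures are hypothetical numbers chosen to reproduce the quoted thresholds, not parameters taken from the paper. It assumes an attacker keeps the full exploit value, while a defender is paid a bounty worth a fraction of it.

```python
def break_even_value(cost_per_scan: float, hit_rate: float, capture_fraction: float) -> float:
    """Smallest exploit value at which expected payoff covers the scanning cost.

    Expected payoff per scan = hit_rate * capture_fraction * exploit_value,
    so the break-even value is cost_per_scan / (hit_rate * capture_fraction).
    """
    if not (0 < hit_rate <= 1 and 0 < capture_fraction <= 1):
        raise ValueError("hit_rate and capture_fraction must be in (0, 1]")
    return cost_per_scan / (hit_rate * capture_fraction)


# All three numbers below are hypothetical, for illustration only.
COST_PER_SCAN = 6.0      # dollars of compute per contract scanned
HIT_RATE = 0.001         # one exploitable contract per 1,000 scanned
BOUNTY_FRACTION = 0.10   # defender is paid 10% of the value at risk

attacker = break_even_value(COST_PER_SCAN, HIT_RATE, capture_fraction=1.0)
defender = break_even_value(COST_PER_SCAN, HIT_RATE, capture_fraction=BOUNTY_FRACTION)

if __name__ == "__main__":
    print(f"attacker breaks even near ${attacker:,.0f}")
    print(f"defender breaks even near ${defender:,.0f}")
    print(f"ratio: about {defender / attacker:.0f}x")
```

In this model the ratio between the two thresholds depends only on the share of value each side captures, so cheaper scanning lowers both thresholds without closing the gap.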

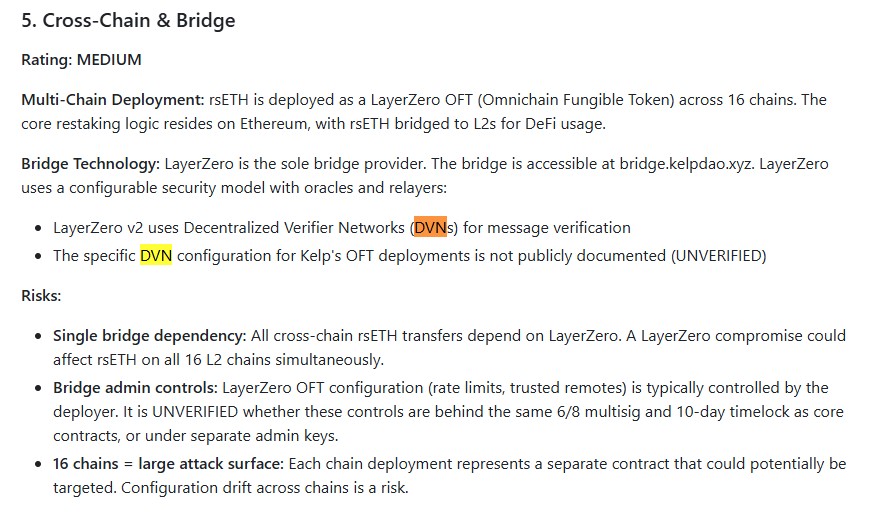

KelpDAO was not a smart contract exploit

As it happens, it wasn’t the smart contracts that were exploited in the KelpDAO attack but the RPC server sitting underneath LayerZero’s Decentralized Verifier Network. Ma says it’s poor cybersecurity to have a system with a single point of failure.

“The DVN (decentralized verifier network) they used was like 1:1, so it was neither decentralized, nor a network. (It was) just like a single verifier on the bridge.”
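The kind of check Ma describes can be sketched in a few lines. The example below is a hypothetical model, not LayerZero's actual API or configuration format: the `VerifierConfig` class, its field names and the verifier labels are all assumptions made for illustration. It counts how many independent verifiers an attacker would have to compromise to push a forged message through, and flags any setup where that number is one.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class VerifierConfig:
    """Hypothetical bridge verifier setup; field names are illustrative only."""
    required: tuple[str, ...]          # verifiers that must all approve a message
    optional: tuple[str, ...] = ()     # extra verifiers, some of which must approve
    optional_threshold: int = 0        # how many optional verifiers must approve


def min_signers(cfg: VerifierConfig) -> int:
    """Number of distinct verifiers that must approve before a message is accepted."""
    return len(set(cfg.required)) + cfg.optional_threshold


def audit(cfg: VerifierConfig) -> list[str]:
    """Return human-readable findings for an obviously weak configuration."""
    findings = []
    if cfg.optional_threshold > len(set(cfg.optional)):
        findings.append("optional threshold exceeds the number of optional verifiers")
    if min_signers(cfg) <= 1:
        findings.append("single point of failure: one verifier can approve a message alone")
    return findings


if __name__ == "__main__":
    one_of_one = VerifierConfig(required=("verifier-a",))
    two_plus_one = VerifierConfig(
        required=("verifier-a", "verifier-b"),
        optional=("verifier-c", "verifier-d"),
        optional_threshold=1,
    )
    print(audit(one_of_one))
    print(audit(two_plus_one))
```

A check like this only works when the configuration is actually known, which is the gap the TrueNorth scan ran into.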

Zengineer, a developer at TrueNorth, claims to have run an “AI-assisted security scan on KelpDAO and flagged their LayerZero DVN bridge config as an unresolved risk” 12 days before the hack.

TrueNorth’s audit on KelpDAO, using its bespoke Claude Code skill two weeks ago, did highlight the DVN configuration as a potential risk. But it noted there was an “information gap” about what the configuration actually was. So the tool was unable to flag the 1:1 setup itself as a risk.

However, it highlights how AI can be used to zero in on DeFi security gaps that lie outside protocol logic.

AI can help with bug hunting too

AI-assisted bug hunting is definitely one of the most promising tools in DeFi’s arsenal. Cosmos Labs CEO Barry Plunkett said this week that AI had massively increased the number of bugs being reported to the firm’s bug bounty program.

“AI is changing the way that bug bounty programs must operate. Researchers armed with AI tools are submitting massively more valid and invalid submissions to our program than ever before. Our program has seen a 900% increase in submission volume from last year, on the order of 20–50 a day.”

Immunefi reports that 61.4% of projects find a critical bug in the first year of running a program, and 93.3% have found a bug after five years. The average number of critical issues found is two, although one project had 50!

The median bounty is $20,000, while the record $10 million payout was for a critical bug in the Wormhole bridge. Needless to say, if you can find one of those for $1.22 in tokens, that’s a pretty good return.

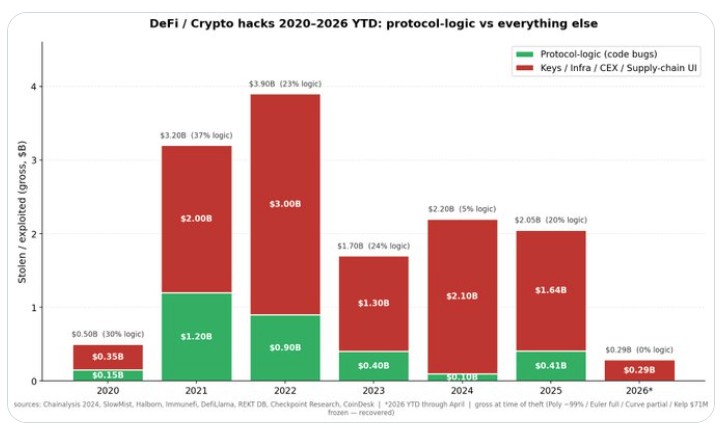

Curve researcher Chado claims that an analysis of DeFi and crypto hacks over the past five years shows the share of exploits blamed on code bugs fell from 37% to under 5% in 2024, suggesting that improved auditing, bug bounties and formal verification are making smart contracts safer.

Formal verification is the difficult answer

Vadim says that in future, DeFi smart contracts will need to be formally verified before they are safe enough to use.

“Assume every contract with a vulnerability will eventually be exploited. The only real defense is formal verification — mathematically proving that the code can only do what it was designed to do, before it ever gets deployed.”

Formal verification would essentially make smart contracts unhackable. Ethereum creator Vitalik Buterin has set the ambitious task of “formally verifying everything” in Ethereum. This used to be so time-consuming and difficult that it was impractical, but AI makes it an achievable goal.

“We’ve also begun actively applying artificial intelligence to generate code proofs demonstrating that the software version running Ethereum does indeed possess the characteristics it’s supposed to have,” he told the Hong Kong Web3 Carnival this week.

“We’ve made progress that was impossible two years ago. Artificial intelligence is developing rapidly, so we’re leveraging this to pursue ultimate simplicity, keeping long-term protocols as simple as possible, and preparing for the future as much as possible.”

Social engineering remains a threat

But even after all the bugs have been weeded out of smart contracts, the humans in charge will remain the vulnerable part of the system. AI can be used to manipulate them too, using deepfakes and data mining. The Drift hack required six months of social engineering just to deploy the malware.

“In these times, smart contracts that have been audited are far safer than the operations around these DeFi platforms, especially operations that have key man risk susceptible to AI social engineering attempts,” Ma says.

“Most DeFi platforms intentionally obfuscate their operations on the human-side in terms of multisig holders and admins and basically it’s this human part that is being targeted right now.”

Andrew Fenton

Andrew Fenton is a writer and editor at Cointelegraph with more than 25 years of experience in journalism and has been covering cryptocurrency since 2018. He spent a decade working for News Corp Australia, first as a film journalist with The Advertiser in Adelaide, then as deputy editor and entertainment writer in Melbourne for the nationally syndicated entertainment lift-outs Hit and Switched On, published in the Herald Sun, Daily Telegraph and Courier Mail. He interviewed stars including Leonardo DiCaprio, Cameron Diaz, Jackie Chan, Robin Williams, Gerard Butler, Metallica and Pearl Jam. Prior to that, he worked as a journalist with Melbourne Weekly Magazine and The Melbourne Times, where he won FCN Best Feature Story twice. His freelance work has been published by CNN International, Independent Reserve, Escape and Adventure.com, and he has worked for 3AW and Triple J. He holds a degree in Journalism from RMIT University and a Bachelor of Letters from the University of Melbourne. Andrew holds ETH, BTC, VET, SNX, LINK, AAVE, UNI, AUCTION, SKY, TRAC, RUNE, ATOM, OP, NEAR and FET above Cointelegraph’s disclosure threshold of $1,000.

Disclaimer

Cointelegraph Magazine publishes long-form journalism, analysis and narrative reporting produced by Cointelegraph’s in-house editorial team with subject-matter expertise.

All articles are edited and reviewed by Cointelegraph editors in line with our editorial standards.

Content published in Magazine does not constitute financial, legal or investment advice. Readers should conduct their own research and consult qualified professionals where appropriate. Cointelegraph maintains full editorial independence.