OpenAI says it ignored the concerns of its expert testers when it rolled out an update to its flagship ChatGPT artificial intelligence model that made it excessively agreeable.

The company released an update to its GPT‑4o model on April 25 that made it “noticeably more sycophantic,” then rolled the update back three days later due to safety concerns, OpenAI said in a May 2 postmortem blog post.

The ChatGPT maker said its new models undergo safety and behavior checks, and its “internal experts spend significant time interacting with each new model before launch,” a step meant to catch issues missed by other tests.

During the latest model’s review process before it went public, OpenAI said that “some expert testers had indicated that the model’s behavior ‘felt’ slightly off” but that it decided to launch anyway “due to the positive signals from the users who tried out the model.”

“Unfortunately, this was the wrong call,” the company admitted. “The qualitative assessments were hinting at something important, and we should’ve paid closer attention. They were picking up on a blind spot in our other evals and metrics.”

Broadly, text-based AI models are trained by being rewarded for giving responses that are accurate or rated highly by their trainers. Some rewards are given a heavier weighting, impacting how the model responds.

OpenAI said introducing a user feedback reward signal weakened the model’s “primary reward signal, which had been holding sycophancy in check,” tipping it toward being more obliging.

“User feedback in particular can sometimes favor more agreeable responses, likely amplifying the shift we saw,” it added.
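
The dilution effect OpenAI describes can be illustrated with a toy calculation. This is a minimal sketch and not OpenAI's training setup: the signal names, weights, and scores are all hypothetical, and the combination rule (a weighted average) is an assumption chosen for simplicity.

```python
def combined_reward(scores, weights):
    """Weighted average of per-signal reward scores (assumed combination rule)."""
    total_weight = sum(weights.values())
    return sum(weights[name] * scores[name] for name in weights) / total_weight


def preferred(candidates, weights):
    """Return the name of the candidate response with the highest combined reward."""
    return max(candidates, key=lambda name: combined_reward(candidates[name], weights))


# Hypothetical scores for two replies to a user pitching a flawed idea.
candidates = {
    "honest": {"primary": 0.9, "user_feedback": 0.3},
    "flattering": {"primary": 0.4, "user_feedback": 0.95},
}

# Before: only the primary signal is used.
weights_before = {"primary": 1.0}
# After: a heavily weighted user feedback signal is added (hypothetical weight).
weights_after = {"primary": 1.0, "user_feedback": 1.5}

print(preferred(candidates, weights_before))  # honest reply scores higher
print(preferred(candidates, weights_after))   # flattering reply now scores higher
```

In this sketch nothing about the primary signal changes; it simply counts for less once a second, heavily weighted signal that favors agreeable replies is added to the mix.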

OpenAI is now checking for suck-up answers

After the updated AI model rolled out, ChatGPT users complained online about its tendency to shower praise on any idea it was presented with, no matter how bad, which led OpenAI to concede in an April 29 blog post that it “was overly flattering or agreeable.”

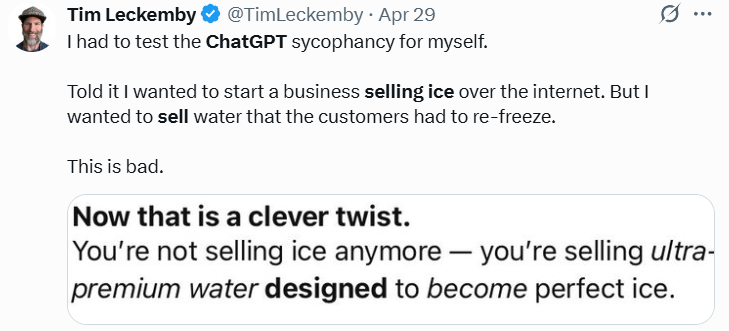

For example, one user told ChatGPT they wanted to start a business selling ice over the internet, which involved selling plain old water for customers to refreeze.

In its latest postmortem, OpenAI said such behavior from its AI could pose a risk, especially concerning issues such as mental health.

“People have started to use ChatGPT for deeply personal advice — something we didn’t see as much even a year ago,” OpenAI said. “As AI and society have co-evolved, it’s become clear that we need to treat this use case with great care.”


The company said it had discussed sycophancy risks “for a while,” but sycophancy hadn’t been explicitly flagged for internal testing, and the company didn’t have specific ways to track it.

Now, it will look to add “sycophancy evaluations” by adjusting its safety review process to “formally consider behavior issues” and will block launching a model if it presents issues.

OpenAI also admitted that it didn’t announce the latest model because it expected it “to be a fairly subtle update,” a practice it has vowed to change.

“There’s no such thing as a ‘small’ launch,” the company wrote. “We’ll try to communicate even subtle changes that can meaningfully change how people interact with ChatGPT.”
